The Death Star Problem

A long time ago in a galaxy far, far away…

TL;DR

- The Death Star was destroyed by a single catastrophic vulnerability

- Modern, centralised internet communications are vulnerable in a similar way

- Encryption combined with decentralisation must be used to protect against this

- Tools exist but need to be made more user-friendly for people to use them

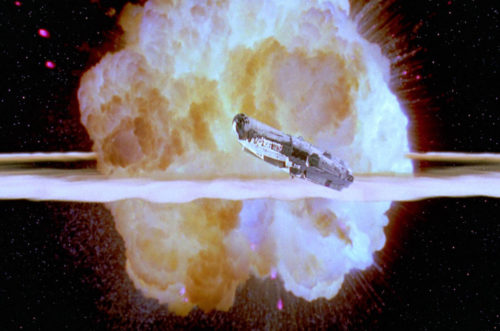

It has become so common as to have become a trope – the “bad guys” have built an extremely complex and effective weapons system which is indestructible but for one tiny weakness. Of course, that one weakness is often in an inexplicably vulnerable location and attacking it has the effect of completely destroying the entire weapons system. The death stars from the Star Wars movies have come to symbolise this concept in pop culture, and I am thankful for that because far more people have seen Star Wars than can point out the information security flaws of many of our commonly-used internet services, even though they boil down to essentially the same thing – a single point of failure.

Believe it or not, the original idea of the internet was to prevent the death star scenario. It was designed to decentralise the storage of information so that in the event of a nuclear war, an enemy couldn’t wipe out “the brains” of the military (an oxymoron?) with one or two well-placed proton torpedoes.

Not so long ago in a galaxy rather nearby…

In the wake of the Snowden revelations, many tech companies took steps to encrypt their lines of communication. Google for example now encrypts all data moving between its data centers, and insists on secure SSL/TLS encryption between your browser and its servers when you access your emails. Encryption is wonderful of course, since without it we’re basically sending packets of information around on postcards that literally anyone can intercept and read without being detected. But is encryption enough?

The short answer to that is, predictably enough, no. Let’s take a classic example – Facebook. I actually remember the heady days when Facebook was still cool, before investors, money, and business models caused them to commodify their users into marketing cattle, to be experimented on unethically at will in the pursuit of profit. Back then, we were labouring under the illusion that our information was really ours, and that when we marked a post as “friends only”, only our friends would be able to see it. Turns out Facebook was the world’s biggest man-in-the-middle attack. But let’s just say for argument’s sake that Facebook are good (they’re not), that we can completely trust them with our data (we can’t), and on top of that, that we can trust them not to collude with US intelligence and large corporations to mine our data (we can’t). There’s still a fundamental problem with the way this system is structured, and that is the death star problem.

The real problem here is that even if we take the leap of faith and assume that all of these people are good, we’re still left with the problem that huge chunks of very detailed data on us are sitting in these huge virtual warehouses. Now I trust that the IT department of a tech behemoth like Google or Facebook is very good at keeping data secure (after all, we wouldn’t want our corporate competitors getting a leg up), but they’re on the losing side of a battle of resources. US and British intelligence have famously thrown sometimes unbelievable amounts of resources at breaking the cryptography that was designed to protect our privacy (see the Logjam attack on Diffie-Hellman key exchange), just to spy on their own citizens. One can only guess at what the Chinese, Russians, etc. are up to in this regard, given that they have very obvious reasons for trying to break into Google and Facebook data centers.

Trust no one, encrypt everything

The first step is to encrypt everything. This is already proving to be difficult. When network protocols were written, security wasn’t a priority – back then “make it work” was the first priority, followed closely by “make it work faster”. Thankfully, the mathematics already exists for us to be able to strongly encrypt everything we might ever need to communicate, in almost any situation we would need to communicate it. The strength of modern encryption systems depends to an extent on mathematical problems which are difficult to solve. RSA, one of the most widely used encryption systems, is a good example: it relies on two very large prime numbers being easy to multiply together, while the result is many times more difficult to separate back into the original two numbers. This allows a very modestly-powered computer (like someone’s phone) to encrypt something that the world’s most powerful computers would take months or years to decrypt without the correct keys.
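
The asymmetry between multiplying and factoring can be seen even at toy scale. The sketch below is purely illustrative: the two primes are tiny by real standards, `factor_by_trial_division` is a hypothetical helper using the most naive method possible, and real attacks on RSA use far better algorithms against far larger numbers.

```python
def factor_by_trial_division(n):
    """Naively recover two factors of n, counting how many candidates were tried."""
    attempts = 0
    candidate = 2
    while candidate * candidate <= n:
        attempts += 1
        if n % candidate == 0:
            return candidate, n // candidate, attempts
        candidate += 1
    return n, 1, attempts  # no divisor found: n is prime


# Two small primes chosen for illustration (assumption: real RSA primes
# are hundreds of digits long).
p, q = 10007, 10009

# The easy direction: a single multiplication.
n = p * q

# The hard direction: search for a divisor of n.
found_p, found_q, attempts = factor_by_trial_division(n)
print(f"{p} x {q} = {n} took one multiplication")
print(f"recovering {found_p} and {found_q} took {attempts} trial divisions")
```

Adding digits to the primes makes the multiplication only slightly slower, while this naive search grows roughly tenfold for every extra digit in the smaller prime.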

But what about these death stars floating around in cyber space?

But what about these death stars floating around in cyber space? (this entire article basically exists so that I could use that line) Even with encryption, we’ve seen computing power increase exponentially in less than a generation. Old stored data, encrypted using old keys – keys which would have taken the world’s computers years to crack ten years ago, now crackable in a few weeks with a modern supercomputer – and it only takes one crack (I’m on fire) to expose everything. Think about what gets stored in emails – login details, passwords, even cryptographic keys.

Decentralise

The answer to the death star problem is the same as the motivation behind the creation of the internet – decentralisation. Everyone encrypts at the “endpoints” (these are the users) rather than at the central node (the death star… er… I mean Google). This way, if Hotmail, Yahoo, Google, etc. are compromised, they’ll only see encrypted gibberish. To get at the keys, they would either need to track down and target every single user, which is rather time-consuming, or sit there with massive computers and individually try to mathematically crack all of the ciphertext which has been encrypted not with a single key, but with as many different keys as there are users.

Amazingly, the tools to be able to do this already exist. What is perhaps a little bit unfortunate is that they aren’t what normal people would describe as “user friendly”. Many of these tools were also designed in the days of the early internet, when visionary programmers saw the need for strong encryption in the hands of everyday internet users. Of course, back in those days (PGP was created in 1991, RSA dates back to 1977) “everyday internet users” worked at places like MIT and CERN and had computer science degrees, and user interface design hadn’t yet become a thing. Sadly, not too much has changed, and as recently as 2005, there was a research paper looking into the lack of usability of encryption systems which concluded that they were unusable for ordinary people.

Slowly but surely the landscape is changing. Encrypted messaging apps like Signal, Wire, Telegram, CryptoCat, and WhatsApp have led the way with user-friendly strong encryption which often also features something called “perfect forward secrecy”. That’s a fancy way of saying that if an attacker compromises the keys for one message, those keys won’t unlock previous messages. (As an aside, even these apps suffer from the death star problem, since messages and metadata are held on centralised messaging servers. Ricochet is one of only a handful of currently-maintained messaging tools that is also decentralised.)
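
One common way to get this property is a one-way “ratchet”: every message gets its own key, and the state used to derive it is hashed forward and then thrown away. The following is a minimal sketch of that idea using only Python’s standard `hashlib`. It is not the protocol any of the apps above actually implement, and the labels, function names, and starting secret are assumptions made up for illustration.

```python
import hashlib


def message_key(chain_key):
    """Derive the key that protects one message from the current chain key."""
    return hashlib.sha256(b"message-key" + chain_key).digest()


def next_chain_key(chain_key):
    """Step the chain forward; SHA-256 cannot practically be run backwards."""
    return hashlib.sha256(b"chain-step" + chain_key).digest()


# Hypothetical shared secret agreed by both parties at the start of a chat.
chain_key = hashlib.sha256(b"example shared secret").digest()

keys_used = []
for _ in range(3):
    keys_used.append(message_key(chain_key))  # key for this message only
    chain_key = next_chain_key(chain_key)     # the old chain key is overwritten

# Someone who steals `chain_key` at this point can derive keys for later
# messages, but would have to invert SHA-256 to reach any key in `keys_used`.
print(len(set(keys_used)), "distinct message keys")
```

In a real client the entries in `keys_used` would also be deleted once each message had been processed; they are kept here only so the result can be inspected.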

But what about her emails…?

This is all very well, and these messaging apps have undoubtedly saved the lives of many activists and journalists, as well as kept the intimate photos sent between lovers (or almost-lovers, at least until the photos were sent) protected from prying eyes, but messaging apps aren’t the only way that people communicate. Email is still king when it comes to important communications, and perfect forward secrecy is far less desirable in the world of email. In the world of instant messaging, messages very quickly become less important, whereas in the world of email, it isn’t unusual to need to hold on to an old email because of a receipt, or for a record of a past event. We use email, in essence, in the way we used to use real (snail) mail.

Currently, the most user-friendly way to send and receive encrypted email is by using PGP/GPG with Thunderbird combined with the Enigmail plugin. To be fair, it is worlds easier to set up this combination than some of the older combinations of software, or *gasp* doing everything from the command line – for those who don’t know what that is, think of the old MS-DOS with blinking green cursors on monochrome screens (if you don’t know what this is, be thankful). However, if my guide to setting it up on OS X is any indication, it’s still not particularly easy, and certainly not easy enough to make everyone switch the next time there’s a “Sony hack” or “Clinton Emails” leak.

Even if you manage to set up encrypted email on your computer, current systems suffer from a serious design flaw which heavily impacts usability. You see, what happens is you have a public key and a private key. Think of the private key as those two big numbers I talked about earlier, and the public key as what you get when you multiply those numbers together. The public key is used to encrypt messages to you, and as the name suggests, it is known to the public (ordinarily posted somewhere where people can simply download it and use it). The private key is kept on your computer and kept secret, and is usually protected by a passphrase.
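
To make the relationship between the two keys concrete, here is textbook RSA at toy scale. In RSA the product of the two primes is the part that gets published, while the primes themselves (and the exponent `d` derived from them) stay private. The numbers and the `encrypt`/`decrypt` helpers are illustrative assumptions, not how PGP/GPG is implemented; real tools use much larger primes, padding, and hybrid encryption.

```python
# Textbook RSA with tiny numbers. Illustration only: never use this for real data.
p, q = 61, 53                # the two secret primes
n = p * q                    # their product, safe to publish
e = 17                       # public exponent (a common textbook choice)
phi = (p - 1) * (q - 1)      # computable only by someone who knows p and q
d = pow(e, -1, phi)          # private exponent (modular inverse, Python 3.8+)

public_key = (n, e)
private_key = (n, d)


def encrypt(message, key):
    """Encrypt a number smaller than the modulus with the public key."""
    modulus, exponent = key
    return pow(message, exponent, modulus)


def decrypt(ciphertext, key):
    """Recover the original number with the private key."""
    modulus, exponent = key
    return pow(ciphertext, exponent, modulus)


message = 65                                   # a message encoded as a number below n
ciphertext = encrypt(message, public_key)      # anyone with the public key can do this
recovered = decrypt(ciphertext, private_key)   # only the private key holder can do this
print(message, ciphertext, recovered)
```

Anyone who could factor `n` back into `p` and `q` could recompute `d`, which is why the size of the primes matters so much.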

Where did I leave my keys?

The first problem is that keeping a private key on an everyday-use computer isn’t very secure. You get a lot of dodgy attachments in emails, the occasional virus, and so on. Look at all the hacks reported in the news: emails from executives leaked online, celebrity nudes, and so on. Most of these come about because of compromised email accounts, and compromised email accounts can very quickly lead to compromised computers (think about your secret password recovery questions, and think about how easy that information might be to figure out if someone were to read all the emails you’ve ever sent; a lot of people also send passwords in unencrypted emails).

The second and perhaps more obvious problem is that nobody checks their email from only one device anymore. Today you probably read your emails on at least two devices. You would need to keep your private key on your computer and whichever other devices you use to check your email, otherwise be stuck in the situation where, if you receive an encrypted email, you have to go to a specific device just to be able to read it (this is currently still the norm in the hacker community). Having your private key on multiple devices increases the chance of your private key being compromised (smartphones are generally not very secure), and having to go to the same computer or device to read encrypted email is a pain.

It’s not a cloud – it’s someone else’s computer

Someone once suggested putting all this in The Cloud as a solution to the many devices problem. From a technical point of view, that’s a terrible idea. The Cloud is one of the strangest victories in marketing I’ve ever witnessed – you put something in a cloud and you can access it from anywhere on any device, or use our software as a service from anywhere – how convenient, what’s the catch? It’s not a cloud – it’s someone else’s computer! It’s not a meteorological phenomenon, it’s the property of a company which is using you for profit, with computers which probably exist in a jurisdiction where a government can legally, secretly walk in and ask for all the encryption keys, like what happened with Lavabit. What you’re really doing is paying to put your stuff on someone else’s computer and paying them to keep it constantly turned on and connected to the internet. All other problems aside, this once again suffers from the death star problem.

Trust your own devices

So what’s the answer? One computer that’s always on, but not owned by someone else – your own “mini cloud” so to speak. A secure box to store your encryption keys. Software that’s open-source so anyone can check the inner workings for back doors and security flaws. A little device to leave around the house, like your router, or media drive, that’s always connected and that you can always securely connect to from any device with an internet connection. Curiously enough, such things already exist, with Cloudfleet and FreedomBox being examples. So why isn’t everyone using one… come to think of it, why hasn’t anyone even heard of these things?

The last piece of the puzzle, and the one most often ignored, is usability. Many, indeed most, technological solutions to problems are built by computer specialists, unwittingly, for other computer specialists. Centralised, insecure-by-design services which we have come to rely on as part of our everyday lives have become tremendously convenient things. In the early days of the internet, this was the only way to deliver a good email service at scale – who remembers how excited we all got back in 2004 when we received our first Gmail invites, and basked in the freedom of having a whole gigabyte of storage space accessible from anywhere with an internet connection? In the time since, these email providers have become very polished products. Some, like ProtonMail, even go so far as to offer strong encryption (but as you have no doubt figured out by now, since all of ProtonMail’s PGP keys are stored in the same place, it also suffers from the death star problem).

Make it foolproof, so even fools can use it

People didn’t adopt the use of computers in huge numbers because processor speeds went up, or because they all suddenly became connected to the internet. It happened when people were able to do things on computers which they had already been doing, only better. They were editing documents, sending mail, and chatting to friends, except these documents could be duplicated infinitely at no extra cost, the mail was much faster than regular mail, and you could chat to friends all over the world without worrying about poor line quality or astronomical international dialing fees. The key to usability and adoption is to make the interface so intuitive and unobtrusive that the user effectively forgets that there’s a computer involved.


Despite the previous 2400 words of this essay, nobody is really that interested in the finer details of how their emails get to them. They want to be able to read and reply to their mail whenever they want, wherever they are. There is an underlying assumption that when you send an email or a message, the only people who can read it are you and the intended recipient. Not your neighbours (relay servers), not the postal company (email providers), not the delivery infrastructure (internet service providers), not the government (the government), and certainly not a foreign government. We now know that this trust has been betrayed. Encouragingly, we also know that the means exist for us to protect ourselves, and efforts are being made to make these tools more accessible to everyday users. But it is also incumbent on users to be informed of the pitfalls of commonly used internet communication services, and to demand better. Without this protection, we can’t have privacy, and without privacy, what little freedom we really have in the world is curtailed.
